#buildinpublic

The results of the ads made me focus on reaching people organically, so I started looking for different channels for that 👀

I will be sharing the rest of the journey. Stay tuned ⚙️

#buildinpublic #SaaS #ai #Meta #design #startups #stablediffusion #ControlNet

When you’ve reached the Stable Diffusion Basic plan limit in a few days 😅

#buildinpublic #iosdev #stablediffusion

Generated and out-painted on @pixio_ai, the new #stablediffusion image generation tool. Try it now! #AI #DigitalArt #GenerativeArt #ArtificialIntelligence #MachineLearning #AIArtCommunity #AbstractArt #NFT #AIArtists #NeuralArt #VQGAN #GANArt #buildinpublic

1/10 🧵 Ever struggled with crafting prompts for generative AI models like Stable Diffusion? Here are some tips on how to consistently generate high-quality images. Let's dive in!

#stablediffusion #ai #controlnet #buildinpublic

2/10 The most important variable in generating quality images is the prompt. Using the right words can significantly improve your results, while a single bad word can lead to an ugly image.

#stablediffusion #ai #controlnet #buildinpublic

3/10 Stable Diffusion doesn't understand language the way humans or language models like ChatGPT do. To master prompting, we need to learn the language that Stable Diffusion understands.

#stablediffusion #ai #controlnet #buildinpublic

4/10 Stable Diffusion learns to associate certain words with certain types of images. So, the key to a good prompt is to include words that the model associates with the images it was trained on.

#stablediffusion #ai #controlnet #buildinpublic

5/10 I generally write prompts as a comma-separated list of words. It can sometimes make sense to use incomplete sentences if you need specific elements in the image to have a certain relationship to each other.

#stablediffusion #ai #controlnet #buildinpublic

6/10 To make the output look like a photo, add style words to the prompt. These are words that push the output towards a certain style, for example "photo", "RAW", "DSLR".

#stablediffusion #ai #controlnet #buildinpublic

7/10 Improve the quality of the output by adding quality words. These are words that Stable Diffusion associates with high-quality images and can improve the output quality even further. Example: "4k, 8k, UHD, professional"...

#stablediffusion #ai #controlnet #buildinpublic

8/10 You can add a negative prompt to push the model away from certain elements or styles. This can be useful in getting more consistency and reducing the likelihood of low-quality images. Example: "bad, ugly, jpeg artifacts"...

#stablediffusion #ai #controlnet #buildinpublic

9/10 If the image quality isn't as expected, try different words that might yield a nicer output. Spend time testing out different prompts to improve the quality.

#stablediffusion #ai #controlnet #buildinpublic

10/10 The key takeaway is that Stable Diffusion doesn't understand language the same way we humans do. Instead of telling it what we want, we have to build up a prompt with words that the model associates with what we want.

#stablediffusion #ai #controlnet #buildinpublic
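
The tips in this thread can be sketched as a small helper that assembles a comma-separated prompt from a subject, style words, and quality words, plus a separate negative prompt. This is only an illustration: the function name `build_prompt`, its parameters, and the example subject are hypothetical and not part of any Stable Diffusion library. The style, quality, and negative words are the ones quoted in the thread.

```python
def build_prompt(subject, style_words=(), quality_words=(), negative_words=()):
    """Assemble a comma-separated prompt and a negative prompt.

    Hypothetical helper for illustration only; it just joins strings.
    """
    parts = [subject, *style_words, *quality_words]
    # Drop empty entries and trim whitespace before joining with commas.
    prompt = ", ".join(p.strip() for p in parts if p and p.strip())
    negative = ", ".join(w.strip() for w in negative_words if w and w.strip())
    return prompt, negative


prompt, negative_prompt = build_prompt(
    "portrait of an old fisherman",  # assumed example subject
    style_words=["photo", "RAW", "DSLR"],
    quality_words=["4k", "8k", "UHD", "professional"],
    negative_words=["bad", "ugly", "jpeg artifacts"],
)
print(prompt)
print(negative_prompt)
```

Keeping the word groups separate makes it easy to swap one group at a time while testing which words actually improve the output, as the thread suggests.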

Added `description` feature.

#BuildInPublic #stablediffusion #AI #domain #HNS #handshake #ICANN #LLM #MachineLearning #PromptEngineering

Before-After

Domain Card Generator (using Stable Diffusion AI)

🎨🖼️

For Blockchain & ICANN Domainers 🫡🫡

#BuildInPublic #AI #LLM #Python #JS #StableDiffusion twitter.com/DotDwebo/statu…

Prototype... (there's a bug: the previous image appears for a while before the new image renders)

Just for fun.

tools:

#Python, #ReplicateLLM, #AlpineJS, #TailwindCSS, and #AxiosJS.

Generate unlimited image variations for free with Stable Diffusion.

producthunt.com/posts/ai-image…

#ProductHunt #ai #stablediffusion #buildinpublic #photography #photoAI #ProductivityBoost #productlaunch

Exciting updates to #stablediffusion with Core ML!

- 6-bit weight compression that yields just under 1 GB

- Up to 30% improved Neural Engine performance

- New benchmarks on iPhone, iPad and Macs

- Multilingual system text encoder support

- ControlNet

github.com/apple/ml-stabl… 🧵

Children's book of the day "Lola's flowers of love" generated by AI 🌻🌹- childbook.ai/book/s/lolas-f…

Story by the user and ChatGPT 3.5

Illustrations by Stable Diffusion

#ai #webdevelopment #storybook #kidsbooks #GPT4 #stablediffusion #personalizedgifts #buildinpublic

🌄🌆 Stable Diffusion as a tool is coming soon to <⚡️> SuperAGI 🚀

#ai #agi #ArtificialIntelligence #stablediffusion #imagegeneration #buildinpublic

I have been experimenting with image-to-video generation on my 3060 Ti.

Loving all the public tutorials out there. Decided to try it on one of my graduation photos 😂

Going to be posting some guides soon to get started.

#stablediffusion #buildinpublic #deforum

Tonight I'm cooking with Git and Python. I need to clean up my code, folders, modules, models, dependencies, etc.

#buildinpublic #AIIA #AIart #aiartcommunity #StableDiffusion #DistropyAI

GirlfriendGPT reading a book at the park.

Think my SelfieGPT tool needs some more work 🤣

#stablediffusion #buildinpublic

Face is the new hand for AI?

Any recommendations on how to fix this in Stable Diffusion?

#buildinpublic #stablediffusion

🚀 AI Tools Today 🚀

Generate any image or avatar with text using StableDiffusion's AI-powered image creation and editing features. #textbasedimagegeneration #stablediffusion

#buildinpublic #indiedev #GPT4 @neuralcamapp

aitoolhunt.com/tool/neural.ca…

Plan on building a product based on Stable Diffusion/ControlNet? 🧠

Hosting diffusion models yourself is hard and time-consuming. Here are some services that can do it for you ⬇️:

#buildinpublic #stablediffusion #controlnet

I'm curious: when it comes to fine-tuning your SD models, what's your go-to platform?

Do you leverage the power of cloud services like AWS, or do you prefer harnessing the might of your local GPU 💻?

#buildinpublic #StableDiffusion

Back with a new AI portrait of @YomiDenzel96

Does the likeness pass?

Likes ❤️ or retweets appreciated 🚀

#buildinpublic #stablediffusion #draw #ai #Dreambooth #intelligenceartificielle

DragGAN's taking generative photo editing to the next level - Excited to see all the cool products #indiehackers will #buildinpublic with this!

🚀 Releasing in June, follow for updates: github.com/XingangPan/Dra…

#hustleGPT #stablediffusion #AI

An illustration of @Stromae that I made by training an AI model and some graphic design skills.

-Working environment on @runpod_io 💻

LIKE for part2 🚀

--

#runpod #stablediffusion #ai #art #stromae #graphiste #AIImage #Dreambooth #buildinpublic

I was working on some very interesting mockups for a beer brand and thought I would share them with everyone here.

#d2c #generativeai #midjourney #stablediffusion #buildinpublic

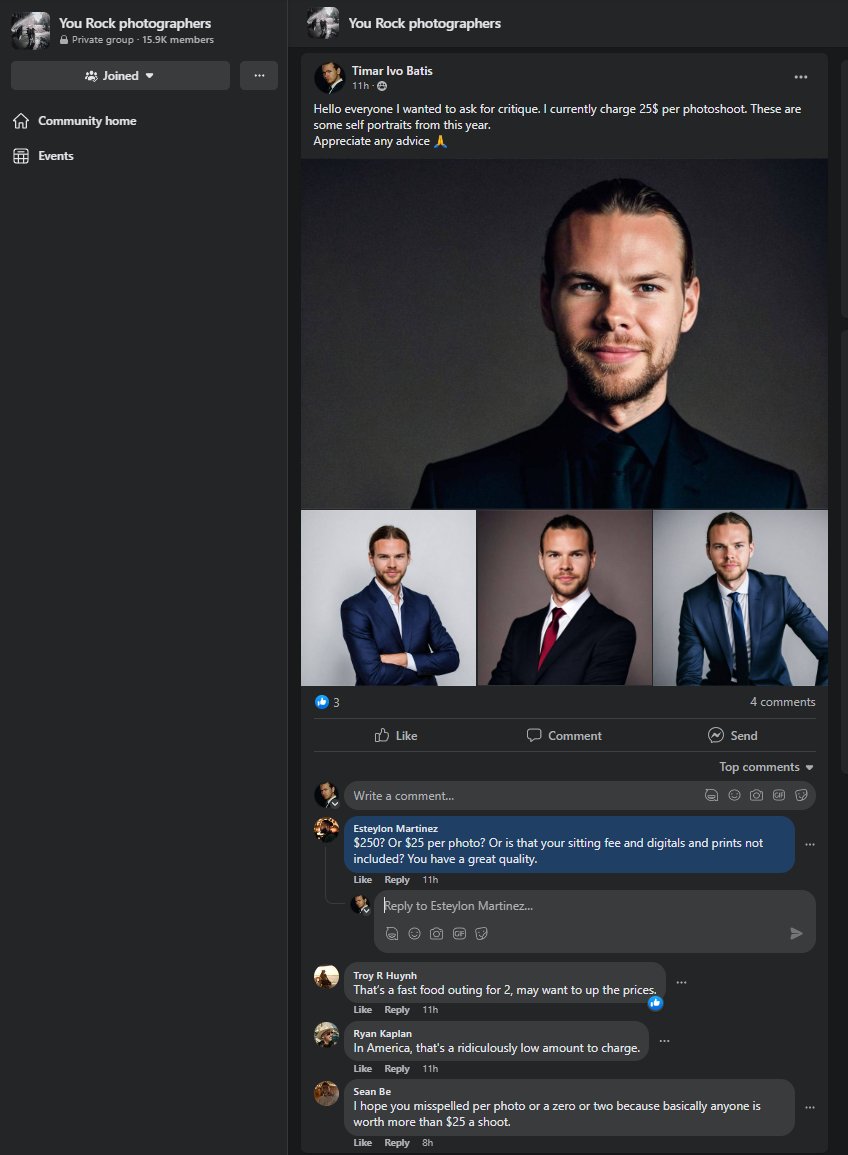

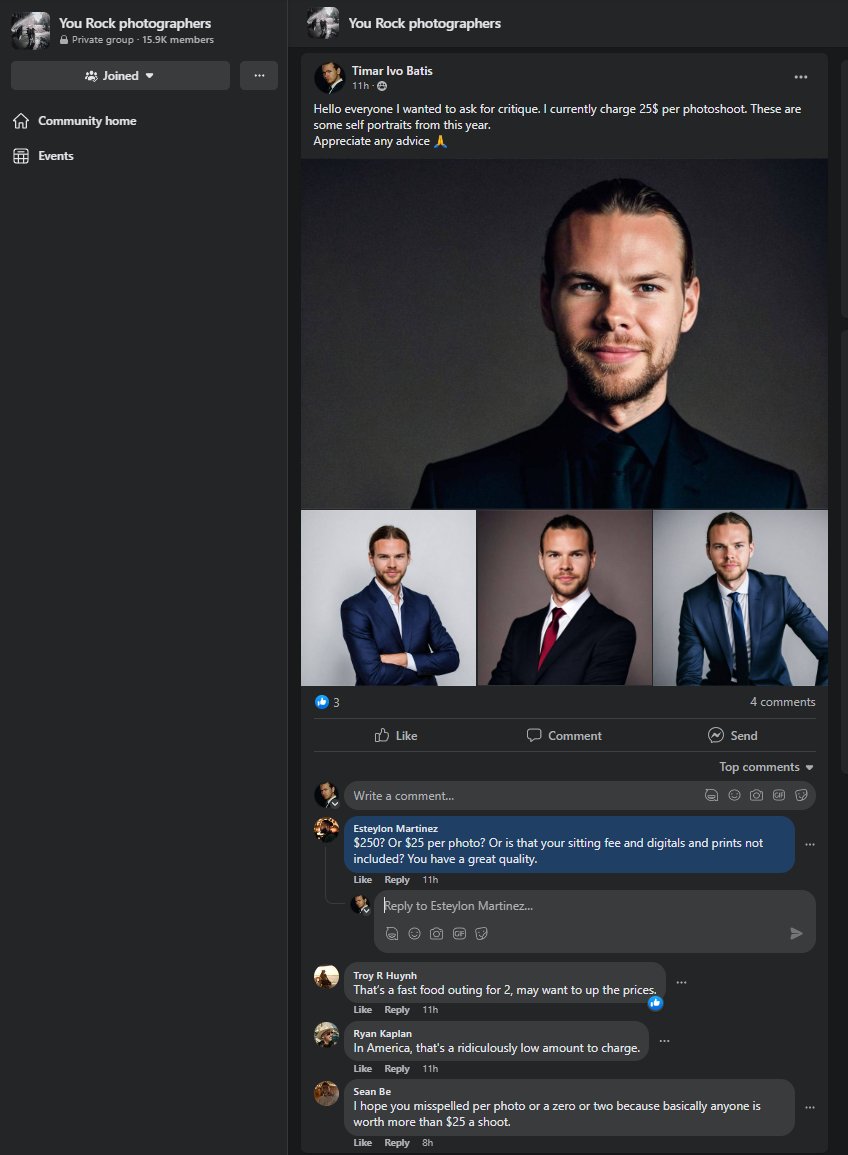

I asked a Facebook group of real photographers to judge AI photos of me without telling them.

Nobody noticed they were AI-generated with @photogenicai

I'll do this again soon.

#buildinpublic #aiphoto #aitools #stablediffusion #midjourney @_buildspace

I see several apps offering to create an avatar at fairly high prices.

I'm developing a solution that lets you train your own model at a more attractive cost.

Demo with @daedalium.

❤️ if the project interests you

#stablediffusion #gpt4 #iA #buildinpublic

I created an iOS Shortcut that lets you train an image-generation model directly on your iPhone. Here's a quick demo with an image generated on @daedalium

#Dreambooth #stablediffusion #oussamaammar #intelligenceartificielle #buildinpublic

Took the pics from @LinusEkenstam and put them through @photogenicai. I think it did a good job 😁

#midjourney #stablediffusion #buildinpublic @_buildspace

twitter.com/LinusEkenstam/…

I know I am late to ControlNet, but this is pretty epic... and I am just getting started.

#buildinpublic #founder #startup #controlnet #stablediffusion

Is it too late to embark on a weekend project creating an app that uses DreamBooth to generate AI models from your photos?🤔

#stablediffusion #AI #buildinpublic #iosdev #SwiftUI

You can see image parameters now, use an image as the init image, and upscale.

@StableAIArt #stablediffusion #aiart #buildinpublic @_nightsweekends @_buildspace

This weekend, I've been building a small tool to help me design my YouTube thumbnails from crappy, low-quality, wrong-aspect-ratio screenshots of a YouTube video.

Here's how it turned out! 🔥

(left is original, right is my tool)

#buildinpublic #stablediffusion #midjourney

These images are all of me, and I generated them using an iOS shortcut that I am currently developing.

Drop a "😍" in the comments to become a beta tester for the shortcut.

#buildinpublic #indiehackers

#stablediffusion #Dreambooth #AIArtwork #graphiste #shorcut

#StarWars Pilot Luke generated with a #stablediffusion LoRA

Testing out ideas for my #buildinpublic AI Meme Creator project.

What should I name this project? twitter.com/i/web/status/1…

All software on the Metrotechs platform is now updated.

The free plan uses GPT-3.5 Turbo.

The paid plans use GPT-4.

Image generator uses Stable Diffusion.

Speech to Text uses Whisper.

Text to Speech uses Amazon Polly.

#buildinpublic #OPENAI #stablediffusion #aws

Damn, I suck at product demos. I gotta get better at that.

Anyway, check it out! I can't wait to get this up and running! (in case that wasn't clear from the video).

#buildinpublic #ai #stablediffusion #productphotography

I'm very close to releasing the new "Product Gallery" feature 😱

Check out a little product demo to get you going!

And start snapping pictures of your products because this is going live very soon!

vimeo.com/821646714?shar…

Got feedback? Share it below! 👇

(6) Meme Creation Tool with something like #stablediffusion

#buildinpublic #buildinpublicmastery #IndieDevs

That's a wrap!

photo of simon stalenhag,8k resoultion,hyper realstic ,white body car, day, 35mm film #buildinpublic #indiehackers #AIArtCommunity #future #digitalArts #AIArt #StableDiffusion apps.apple.com/app/magic-ai/i…

Massive update coming. 😍

#swift #buildinpublic #indiehackers #AIArtCommunity #DigitalArts #free #StableDiffusion #AICommunity twitter.com/burkanyilmaz/s…

I am using @runpod_io right now for hosting my stable diffusion-based serverless instances.

What are your reviews about their service? What are other people using?

#buildinpublic #generativeai #stablediffusion #serverless

Finished hacking this little fun project. fundalytica.com/financial-fict… It was a good opportunity to code and do lots of sysadmin with ChatGPT. #vuejs #stablediffusion #openai #buildinpublic

Guess what?

You can now master Stable Diffusion model training in just 8 minutes! 🚀

Unleash your creativity and discover Dream Diffusion on RapidAPI! 😎💡🤖

rapidapi.com/nunobispo/api/…

#StableDiffusion #Dreambooth #AI #RapidAPI #buildinpublic

You can now train Stable Diffusion models in 8 minutes 😎

It also supports 3 different styles of training:

- Style

- Face

- Object

Model training costs $2.50 per API call, and image generation costs $0.05 per API call.

API available at: rapidapi.com/nunobispo/api/…

#stableDifusion
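
As a rough illustration of the pricing quoted in this post, the cost of training a model and then generating a batch of images can be worked out as follows. The function name `estimate_cost` and the example call counts are assumptions made for this sketch; only the two per-call prices come from the post.

```python
from decimal import Decimal

# Per-call prices as quoted in the post.
TRAINING_COST_PER_CALL = Decimal("2.50")
GENERATION_COST_PER_CALL = Decimal("0.05")


def estimate_cost(training_calls: int, generation_calls: int) -> Decimal:
    """Return the total cost in dollars for the given number of API calls."""
    return (TRAINING_COST_PER_CALL * training_calls
            + GENERATION_COST_PER_CALL * generation_calls)


# Assumed usage: one training run followed by 100 generated images.
total = estimate_cost(training_calls=1, generation_calls=100)
print(f"${total}")  # prints "$7.50"
```

`Decimal` is used instead of floats so that small per-call prices add up without rounding noise.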

Do you want to generate your own AI images?

Then try Imaginator, now with Stable Diffusion 2.1

imaginator.developer-service.io

#aiart #ai #aiartwork #stablediffusion #aiartcommunity #AIArtistCommunity #buildinpublic #AIArtworks

👀 Want to create your own AI-generated images?

Look no further than Imaginator! 🔥 Now with Stable Diffusion 2.1, it's easier than ever.

Try it out at the link below! 🖼️

imaginator.developer-service.io

#aiart #ai #stablediffusion #AIArtistCommunity #buildinpublic

Which Stable Diffusion API would be the fastest and most affordable to use in a project?

I see there are many hosted models out there but I need some experienced advice.

stablediffusionapi.com

huggingface.co/stabilityai/st…

platform.stability.ai/docs/getting-s…

#buildinpublic #stablediffusion

Text Generation AI isn't inspiring me any more. So I am going to explore Image Generation now.

Thinking of starting with Stable Diffusion. Does anyone have any tips for me?

#buildinpublic #stablediffusion

#stablediffusion 1.5 + #Dreambooth trained model results (num_inference_steps 100, guidance_scale 9, scheduler DDIM) #buildinpublic
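
For readers who want to try the settings quoted in this post, here is a minimal sketch assuming the Hugging Face `diffusers` library and a CUDA GPU. The post does not say which tooling was used, so the library choice, the function names, the model path, and the prompt are all assumptions; only the step count, guidance scale, and DDIM scheduler come from the post.

```python
def sampling_settings():
    """Sampling settings quoted in the post."""
    return {"num_inference_steps": 100, "guidance_scale": 9}


def generate(model_path: str, prompt: str):
    """Generate one image from a DreamBooth-trained checkpoint (assumed setup)."""
    # Imported lazily so the settings helper works without diffusers installed.
    import torch
    from diffusers import DDIMScheduler, StableDiffusionPipeline

    pipe = StableDiffusionPipeline.from_pretrained(
        model_path, torch_dtype=torch.float16
    )
    # Swap the default scheduler for DDIM, reusing the pipeline's scheduler config.
    pipe.scheduler = DDIMScheduler.from_config(pipe.scheduler.config)
    pipe = pipe.to("cuda")
    return pipe(prompt, **sampling_settings()).images[0]
```

A hypothetical call would look like `generate("path/to/dreambooth-model", "photo of sks person").save("out.png")`, where both the path and the `sks` token are placeholders.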

cute creatures, imaginative world, happy, bright, high details #buildinpublic #digitalart #aiartcommunity #stablediffusion #iOS17 apps.apple.com/app/magic-ai/i…

Using the outpainting technique with Stable Diffusion and DALL·E 2, I was able to extend the edges of the images.

Some examples:

#buildinpublic #stablediffusion #Dalle2

The cat's out of the bag! 🙀

If you're looking for a way to generate cheap, professional-looking product photography 📸, request a BetaKey now! 🔥🔥

landing.virtual-snap.com

#buildinpublic #stablediffusion

Checking out #visualchatgpt tonight, come along for the ride while we #buildinpublic.

Microsoft published an interactive chat image-editing interface with #chatgpt and #stablediffusion. Check out the demo:

Built some tooling for @photogenicai

Generating the same photo in 3 different models for rapid evaluation.

It took a while to build the infrastructure, but it's a delight to see 30 manual steps automated. 10 min => 30 sec

#buildinpublic #stablediffusion #aiphotography #aiart

#AI
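
One way to picture this kind of tooling is a small fan-out helper that sends the same prompt to several models at once and collects the results side by side. This is a sketch under assumptions, not the author's actual infrastructure: `compare_models`, `fake_backend`, and the model names are hypothetical stand-ins, and a real backend would call an image generation service instead of returning a string.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict


def compare_models(prompt: str, backends: Dict[str, Callable[[str], str]]) -> Dict[str, str]:
    """Run the same prompt through every backend in parallel.

    Returns a mapping of model name to that model's output.
    """
    # ThreadPoolExecutor requires at least one worker, even with no backends.
    with ThreadPoolExecutor(max_workers=max(1, len(backends))) as pool:
        futures = {name: pool.submit(fn, prompt) for name, fn in backends.items()}
        return {name: future.result() for name, future in futures.items()}


def fake_backend(model_name: str) -> Callable[[str], str]:
    """Hypothetical stand-in for a real model call; returns a label, not an image."""
    def generate(prompt: str) -> str:
        return f"{model_name}:{prompt}"
    return generate


if __name__ == "__main__":
    backends = {name: fake_backend(name) for name in ("model-a", "model-b", "model-c")}
    for name, output in compare_models("studio portrait", backends).items():
        print(name, "->", output)
```

Threads suit this case because each call would mostly wait on a remote service, so the three requests overlap instead of running one after another.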

Week 10 ✨(@Illusionai_ ):

* Open to the public

* Invite friends and foes

* Getting customer feedback

* Fix the majority of bugs

* Stabilized AI engine

* First customer 🚀

#generativeai #openai #ai #chatgpt #midjourneyAi #midjourneyV4 #stablediffusion #buildinpublic #gpt3 #gpt4

Just learned how to create fire prompts for AI images

Prompts are legit just magic spells lol.

Top G if you know the two figures below.

Can you guess what prompts I must have used?

#buildinpublic #stablediffusion #ChatGPT

Attending the AI Hackathon hosted by @NirantK in 30 mins

Suggest an idea/problem that you face and think can be solved using AI/NLP/ChatGPT/Stable Diffusion?

#Hackathon #stablediffusion #LLM #OpenAI #ChatGPT #gpt3 #idea #crowdsourcing #TwitterSmarter #buildinpublic

Hosting Deep Coffee for folks interested in hacking with @LangChainAI, @huggingface or @StabilityAI SD in Bengaluru this Friday!

Come hack and if you ship, I'll buy you coffee!

partiful.com/e/ZHSDPXfv63fa…

Today we have launched our product on Product Hunt.🔥 We would be very grateful for any support🙏 💚

producthunt.com/posts/childboo…

#ai #gpt3 #producthunt #launch #buildinpublic #dalle #stablediffusion #buildinginpublic #OpenAI

Today we are launching our product on Product Hunt.🔥 We would be very grateful for upvotes, comments, and any feedback. 🙏 💚

producthunt.com/posts/childboo…

#ai #gpt3 #producthunt #launch #buildinpublic #dalle #stablediffusion #buildinginpublic #OpenAI

Built a Startup in 4 hours. ⚡

Bought a Domain ✅

Built a Landing Page ✅

Created Twitter Page ✅

Created LinkedIn Page ✅

Get yourself Professional AI Photos with ProSnapAi.xyz

#buildinpublic #ai #startups #stablediffusion #aiartcommunity

Now, I'm using this little dashboard to monitor all the model finetuning and image generation jobs 👀

Things I can do:

✔️ Monitor all the jobs under one dashboard

✔️ Get notified when any job fails

✔️ Auto/Manual restart failed jobs

#buildinpublic #stablediffusion #Dreambooth
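
The three capabilities listed above (one view of all jobs, a notification on failure, automatic or manual restarts) can be sketched in a few lines of Python. Everything here is an assumption for illustration: the `Job` and `JobMonitor` names, the status strings, and the restart limit are hypothetical and do not describe the author's dashboard.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Job:
    job_id: str
    status: str = "running"  # "running", "succeeded", or "failed"
    restarts: int = 0


class JobMonitor:
    """Tracks fine-tuning and generation jobs in one place (hypothetical sketch)."""

    def __init__(self, notify: Callable[[str], None], max_restarts: int = 2):
        self.jobs: Dict[str, Job] = {}
        self.notify = notify              # called with a message when a job fails
        self.max_restarts = max_restarts  # limit for automatic restarts only

    def track(self, job_id: str) -> None:
        self.jobs[job_id] = Job(job_id)

    def report(self, job_id: str, status: str) -> None:
        """Record a status update; notify and auto-restart on failure."""
        job = self.jobs[job_id]
        job.status = status
        if status == "failed":
            self.notify(f"Job {job_id} failed")
            if job.restarts < self.max_restarts:
                self.restart(job_id)

    def restart(self, job_id: str) -> None:
        """Restart a job; also usable as the manual restart action."""
        job = self.jobs[job_id]
        job.restarts += 1
        job.status = "running"

    def failed(self) -> List[str]:
        return [j.job_id for j in self.jobs.values() if j.status == "failed"]


if __name__ == "__main__":
    alerts: List[str] = []
    monitor = JobMonitor(notify=alerts.append, max_restarts=1)
    monitor.track("finetune-1")
    monitor.report("finetune-1", "failed")  # notified, then auto-restarted
    monitor.report("finetune-1", "failed")  # notified, restart limit reached
    print(alerts, monitor.failed())
```

Capping automatic restarts keeps a job that fails every time from looping forever, while the manual `restart` stays available once the cause has been fixed.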

Download the latest version to use Stable Diffusion v2.1 from your iPhone and iPad! Create unique art in seconds!

#stablediffusion #aiart #aiartgenerator #aiartcommunity #ios #app #buildinpublic

apps.apple.com/app/apple-stor…

Week 6 ✨:

* Internal API

* API key per account

* OpenAI integration

* Report a bug / contact us page

* First 3 templates

* MVP is ready 🫶

#generativeai #openai #ai #chatgpt #midjourneyAi #midjourneyV4 #stablediffusion #buildinpublic #gpt3 #gpt4

Follow @Illusionai_

Hello #buildinpublic founders, if you are having a hard time hosting Stable Diffusion or any open-source model in your own infra (AWS, GCP, or Azure account), hit me up. I can save you time and money. #stablediffusion #ChatGPT #GenerativeAI

@igcorreia You can sign-up here: illusion.ws #generativeai #openai #ai #chatgpt #midjourneyAi #midjourneyV4 #stablediffusion #buildinpublic #gpt3 #gpt4

Week 5 ✨:

* Pricing Page

* Checkout flow (Stripe Checkout)

* Pricing plans - Starting at 12.99 for now

* Default credits per user: 10

* Upgrade CTAs

#generativeai #openai #ai #chatgpt #midjourneyAi #midjourneyV4 #stablediffusion #buildinpublic #gpt3 #gpt4

Follow @Illusionai_

Free to use; only the AI features are paid. #buildinpublic #indiedevhour #saas #stablediffusion #aigc

AiCanvas is live on Product Hunt and needs your support. #buildinpublic #indiedeveloper #stablediffusion #saas

AiCanvas: AIGC meets canvas, svg and animations result video producthunt.com/posts/aicanvas via @producthunt

When I combine #ai with #ar, this happens :) What do you think?

#stablediffusion #buildinpublic #avatarapp

Just figured out how to create more intricate #StableDiffusion prompts and my experiments produced some pretty amazing visuals - check out these awesome pics! Crafting prompts is like using magic spells 🧙♂️ #buildinpublic #AI

@_buildspace

Does anyone know which AI model TikTok's AI portraits are using?

Results are as good as DreamBooth, but they are training on just one photo and within seconds.

#buildinpublic #stablediffusion

The user interface for designers & creators to truly put AI into the everyday workflow

#stablediffusion #buildinpublic #ChatGPT #AIart #DesignThinking #interiordesign #interiordesigner

The true place to brainstorm and iterate with AI in your everyday design work @FabrieDesign

youtube.com/watch?v=5kAHSv…

#stablediffusion #design #buildinginpublic #buildinpublic #darkart #designer #GraphicDesigner

Week 4 ✨:

* OG: Title

* OG: Image

* OG: Description

* Add Terms of Service

* Add Privacy Policy

* Create a Campaign in HubSpot

* Create a Welcoming Email

* Beta Signup

#generativeai #openai #ai #chatgpt #midjourneyAi #midjourneyV4 #stablediffusion #buildinpublic #gpt3 #gpt4
